Acting out? Points to consider when planning to include actigraphy measurements in your study design

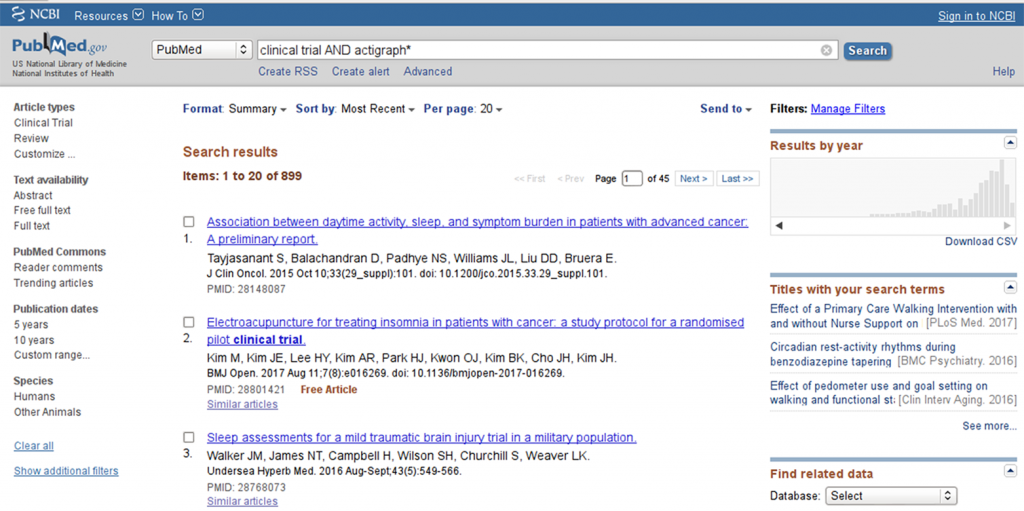

A simple PubMed search for “clinical trial AND actigraph*” shows a remarkable trend towards increasing use of actigraphy tools in clinical research, peaking after 2010 (see Figure 1).

This is not surprising, given that digital tools, apps and activity trackers are becoming increasingly available and popular among the general public, especially in the context of hobby sports and health self-monitoring. In fact, whenever the digitalization of healthcare and health research is discussed, keywords like actigraphy, vital sign monitoring and somnography come up.

From a medical perspective, assessing physical activity and activity capacity, vital signs (like heart rate or blood pressure), and/or sleep quality continuously in real time, rather than as a snapshot at a visit, makes perfect sense.

For instance, a 6-minute walk test result at a visit might not detect a general decrease in the subject’s physical activity. Similarly, pulse and blood pressure are often not representative when assessed at the doctor’s office and might differ drastically when measured in the home setting. Finally, subjective sleep quality data are often difficult to interpret, whereas objective sleep parameters (e.g. awakening from sleep, sleep fragmentation) may offer additional insight. Such parameters may in fact open a novel dimension of outcome parameters that could enrich the value story of novel therapies.

That being said, when planning to include actigraphy measurements in your study design, there are several points to consider, including the validity of the method, data transfer and data analysis, as well as “trivial” yet vital considerations like the memory capacity and battery life of the device.

So let’s dive in.

How do you measure activity?

In most cases, currently used actigraphy devices rely on accelerometry to provide information on the subject’s activity. An accelerometer can be something as simple as a step counter (registering motion) or part of a more complex device, such as a dedicated actigraphy device or a cell phone.

Most devices on the market use a triaxial accelerometer, i.e. the device can register movement along all 3 axes (x, y and z). For each axis, the occurrence of movement in a given time window can be detected. This means that for each axis the accelerometer registers a condition “movement” (coded as 1) or “no movement” (coded as 0) for each time interval; the shorter the intervals, the more precise the measurement will be (but the more data it will produce).
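To make the binary coding concrete, here is a minimal sketch in Python. It is not how any particular device works internally: the input values, the unit (g) and the `threshold` of 0.05 are assumptions chosen purely for illustration.

```python
def binarize_epochs(samples, threshold=0.05):
    """Turn per-interval readings into 1/0 movement flags for each axis.

    samples: list of (x, y, z) tuples, one per time interval, each holding a
             hypothetical change in acceleration (in g) for that axis.
    threshold: assumed cut-off above which an axis counts as "movement".
    """
    return [
        tuple(1 if abs(value) > threshold else 0 for value in interval)
        for interval in samples
    ]


# Three made-up one-second intervals: rest, movement in x only, movement in all axes.
epochs = [(0.00, 0.01, 0.00), (0.20, 0.02, 0.00), (0.10, 0.30, 0.07)]
flags = binarize_epochs(epochs)
print(flags)  # [(0, 0, 0), (1, 0, 0), (1, 1, 1)]
```

Each tuple in the output is one time interval; halving the interval length would double the number of tuples for the same recording time.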

In addition, some devices can also detect the intensity of movement along each axis – so instead of a binary movement detection (movement yes or no), the device scales the intensity of the movements. This allows thresholds to be set to distinguish between voluntary and involuntary movements, to detect movements during sleep, or to classify activity bouts into different categories (e.g. mild, moderate and strenuous activity).
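The threshold idea can be sketched as follows. The cut-off values and the arbitrary intensity units are hypothetical; real cut-offs depend on the device, the wear position and the population, and should come from a validated source.

```python
def classify_intensity(value, mild_min=100, moderate_min=500, strenuous_min=1500):
    """Map one interval's intensity value to an activity category.

    All cut-offs are illustrative assumptions in arbitrary units,
    not validated thresholds for any real device.
    """
    if value < mild_min:
        return "none"
    if value < moderate_min:
        return "mild"
    if value < strenuous_min:
        return "moderate"
    return "strenuous"


# Made-up intensity values for four consecutive intervals.
intensities = [20, 250, 900, 2000]
categories = [classify_intensity(v) for v in intensities]
print(categories)  # ['none', 'mild', 'moderate', 'strenuous']
```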

What do the data look like?

So say you have a wrist-worn accelerometer that detects movement along 3 axes in a binary way once per second (i.e. for each second the device is worn, it detects whether there is movement in the x, y and/or z axis). For 24 h (86400 seconds) and 3 data points per second (a 1 or 0 for each axis, depending on whether there was movement in that axis or not), this means 259200 data points per day. And usually you want to measure not only for a day but for several days or even weeks. Moreover, a sampling rate of 1 Hz (1 data point per second) is fairly low – in some settings you would want to assess intervals of 0.5 seconds or even less – meaning even more data. This definitely goes beyond the usual Excel-based data analysis.
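The arithmetic is easy to reproduce. The sketch below simply multiplies out the example; the 14-day duration and the 2 Hz rate (one data point every 0.5 seconds) are illustrative choices, not recommendations.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86400


def data_points(days, sampling_rate_hz=1.0, axes=3):
    """Number of raw values recorded: days x seconds x rate x axes."""
    return round(days * SECONDS_PER_DAY * sampling_rate_hz * axes)


print(data_points(1))        # 259200  (one day at 1 Hz, 3 axes)
print(data_points(14))       # 3628800 (two weeks at 1 Hz)
print(data_points(14, 2.0))  # 7257600 (two weeks at 2 Hz, i.e. 0.5 s intervals)
```

For comparison, an Excel worksheet holds at most 1,048,576 rows, so even with one row per second (14 x 86400 = 1,209,600 rows) a two-week recording from a single subject no longer fits on one sheet.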

Therefore, in most cases the vendor providing the device will have a software solution in which the data are integrated into specific pre-defined parameters, e.g. time spent moving. Usually the provider has validated these algorithms (be sure to check!) and has set certain thresholds to differentiate movement from artifacts.

So the good news is: instead of importing raw accelerometry data into your database, you would receive integrated data. In the above example you would not have 86400 x 3 data points, but one number for the time the subject spent in movement and one for the time spent standing still. This in turn means that you need to decide a priori what kind of data integration you want (e.g. time spent in movement over defined periods of time) and whether the device-software package can actually provide these integrations.
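As a rough illustration of what such an integration does, the sketch below collapses per-second binary flags into two totals. The rule that an interval counts as “moving” when at least one axis registers movement is an assumption made for this example; a vendor’s validated algorithm will typically be more involved (e.g. artifact filtering).

```python
def summarize(flags, interval_seconds=1):
    """Collapse per-interval (x, y, z) 0/1 flags into time moving vs. time still.

    Assumption: an interval counts as "moving" if any axis is flagged 1.
    """
    moving = sum(1 for interval in flags if any(interval))
    still = len(flags) - moving
    return {
        "seconds_moving": moving * interval_seconds,
        "seconds_still": still * interval_seconds,
    }


# Five made-up one-second intervals.
flags = [(0, 0, 0), (1, 0, 0), (0, 0, 0), (1, 1, 1), (0, 1, 0)]
summary = summarize(flags)
print(summary)  # {'seconds_moving': 3, 'seconds_still': 2}
```

The point is less the code than the decision it encodes: once the data are summarized this way, the per-second detail is gone, so the integration rule has to be chosen before the study starts.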

Of course there are also devices that do not need external software to integrate the data – the built-in software of the device does the job instead. But be aware that, in this case, you have no way of defining how the data are integrated – you have to accept what the device does. In many cases this may be sufficient; in other settings (depending on the scientific question) it might be completely unsatisfactory.

So the most important question is: What are you up to?

As usual, this is the core question when looking for a method to meet your needs. Ultimately, your scientific question and the nature of your study will determine the device you will need to use. Here are some questions that you might want to address during the design phase of your study to narrow down the choice of device considerably:

The “importance” of your endpoint will have a considerable effect on the device choice but also on the entire study logistics. In many studies, actigraphy endpoints are exploratory or “nice to haves” – in such studies the focus is somewhere else and it is completely reasonable to try to fit the actigraphy “gadget” into a study optimized to show something else. However, if actigraphy (or sleep and/or vital sign) measurements are important secondary or even primary endpoints, you will need to build the study logistics around the device. Sounds trivial, right? But it is not that easy.

For instance: Say you want to assess diurnal activity profiles and night-time sleep continuity in a certain patient population. Sleep continuity may be a parameter of tremendous importance, as it could indicate sleep disturbances like frequent awakenings and the resulting sleep fragmentation. Just think of all the indications where this could be relevant – from psychiatric disorders to urological issues, you may be able to obtain data with real impact on the patients’ quality of life.

To assess sleep continuity with a wrist-worn actigraphy device, you need to measure movements at night with high enough sensitivity to “catch” time windows with increased activity. This in turn means that you will need to increase the sampling rate of your device (i.e. how often the device records movements). As a consequence, you record more data, which means that the device’s memory fills up faster and its battery drains sooner. Therefore, in such a setting you will need to build your study infrastructure accordingly, e.g.

- frequent visits, even if it is only to exchange the device (but then you need 2 devices per patient), or

- patients receive kits and devices to charge the battery themselves, and only the data download and transfer are done at the site.
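The memory side of this trade-off can be estimated with a back-of-the-envelope calculation. All numbers below (64 MB of storage, 2 bytes per stored value, the two sampling rates) are hypothetical and should be replaced with the figures from the device’s data sheet; battery drain is not modelled here.

```python
SECONDS_PER_DAY = 86400


def days_until_full(memory_bytes, sampling_rate_hz, axes=3, bytes_per_sample=2):
    """Estimate how many days of recording fit into the device memory.

    Assumes uncompressed storage with a fixed number of bytes per value;
    real devices may compress or pre-aggregate data.
    """
    bytes_per_day = SECONDS_PER_DAY * sampling_rate_hz * axes * bytes_per_sample
    return memory_bytes / bytes_per_day


memory = 64 * 1024**2  # hypothetical 64 MB of on-device storage

low_rate_days = days_until_full(memory, sampling_rate_hz=1)
high_rate_days = days_until_full(memory, sampling_rate_hz=30)
print(round(low_rate_days, 1))   # 129.5
print(round(high_rate_days, 1))  # 4.3
```

Under these assumptions, moving from 1 Hz to 30 Hz shrinks the recording window from months to a few days, which is exactly the kind of constraint that drives the visit schedule or the need for a second device.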

The bottom line

Technological developments and innovations increasingly allow for valuable additions to clinical research, especially for monitoring patients’ body functions outside of the study site. Actigraphy, vital sign measurements and somnography are merely the tip of a new and exciting iceberg of opportunities. Already, implantable and skin-worn technologies can measure biomarker levels at any given time of day – adding a whole new level of complexity.

The above considerations show that even “simple” parameters like actigraphy can be challenging to implement in a clinical study setting for various reasons. A precise definition of the scientific question and an individualized assessment of requirements and potential benefits are the essential starting point for identifying the device or method that will suit the needs of your individual study.