By Martina Mršnik and Dr. Christoph Engler

First experiences – large language models

The importance of artificial intelligence (AI) and its potential impact on the workforce entered the limelight in the final weeks of 2022. News outlets and websites reported on a groundbreaking achievement in AI: the development of chatbots based on large language models (LLMs) that the general public can use in an intuitive, conversational and user-friendly manner (1–3). The talk of the town has been OpenAI’s ChatGPT in particular, which was made available to the public for free upon its release. A paid version was released afterwards (4, 5), and on March 14th 2023 OpenAI announced GPT-4, an upgraded and more capable model that accepts image and text inputs and was made available to paying customers (6). Other companies have been following suit and integrating chatbots into their own search engines, e.g. You.com’s YouChat (7, 8) or Google’s Bard and the upcoming Sparrow from DeepMind (9, 10). With Microsoft as a main investor in OpenAI (11), ChatGPT is already being implemented in Microsoft products such as the search engine Bing, MS Teams and Office 365 (12, 13).

The basis for LLMs is natural language processing (NLP), a branch of AI concerned with teaching computers to understand text and spoken words in much the same way human beings can (14, 15).

GPT-3.5, on which ChatGPT is based, was trained to generate human-like text by predicting the next word in a sequence based on the context provided by the previous words. While it was trained on a large amount of text data, the exact data and the details of the algorithm are not publicly known (16).
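To make the idea of next-word prediction more tangible, here is a deliberately simplified sketch in Python. It is not how GPT-3.5 works internally (those details are not public, and LLMs use large neural networks rather than word counts). It only illustrates the principle of choosing the next word from what has been seen before. The function names and the tiny training text are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Made-up training text; a real model is trained on vastly more data.
corpus = (
    "the patient received the study drug . "
    "the patient reported no adverse events . "
    "the patient received a placebo ."
)

model = train_bigram_model(corpus)
print(predict_next_word(model, "patient"))  # prints "received"
```

In this toy text, "patient" is followed twice by "received" and once by "reported", so the sketch predicts "received". An LLM applies the same principle at a far larger scale and takes much more of the preceding context into account.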

The first experiences

If used correctly and guided in a specific direction, LLMs can already deliver quite good texts on a wide range of topics. At present, many articles report on their applicability in a myriad of work environments and offer opinions on how this will change the landscape for different professions (17).

Use in the medical and research communities

The medical community has also been actively exploring how to implement this technology in medical practice in order to facilitate its work. Since medical professionals are often burdened by a large amount of administrative documentation, LLMs could be a very useful tool for reducing this workload. For example, LLMs could generate texts for letters to insurance companies, write medical reports, or generate texts that can be used to explain diseases to patients (18–20).

Researchers have also identified many potential uses for their work, from writing a first draft of a scientific manuscript to summarizing already published papers (21). Researchers have even tested the use of LLMs to predict dementia from spontaneous speech, as speech is an important parameter in neurodegenerative disorders like Alzheimer’s disease. One study showed that text embeddings powered by GPT-3 can reliably distinguish individuals with Alzheimer’s disease from healthy controls. LLMs therefore have the potential to facilitate early diagnosis of dementia and to enable interventions tailored to individual needs (22).

Use in clinical research

Even though there have not been many reports on the practical use of LLMs in clinical research so far, LLMs clearly have the potential to change everyday work in this field. Medical writing, regulatory affairs and programming (e.g. SAS) in particular could be affected in the future (23). For instance, writing the medical background section for a specific clinical indication in a clinical trial protocol, or even writing an entire protocol or informed consent form, could be facilitated by text suggestions generated by LLMs. Reporting results in a clinical study report and writing documents for regulatory submission are two further examples where such an AI tool could be used (24).

GKM’s first experiences

At GKM, we tested the skills of ChatGPT (based on model GPT-3.5) and YouChat on different medical writing tasks for a mock study (see examples below). The text was delivered in a matter of seconds, was concise and “made sense” at first glance. However, ChatGPT’s output lacked crucial elements, including specific references, and could therefore only be used, if at all, as a first draft. The knowledge on which it was trained came from open-source databases, which naturally also contain incorrect data, and extends only until 2021. Moreover, it was not clear which sources exactly were used for the text output and, in particular, whether the provided text qualified as original text or whether proprietary text was quoted without disclosing its sources.

When we tested YouChat, whose output is supposed to be generated from real-time data, the text was too vague to be used.

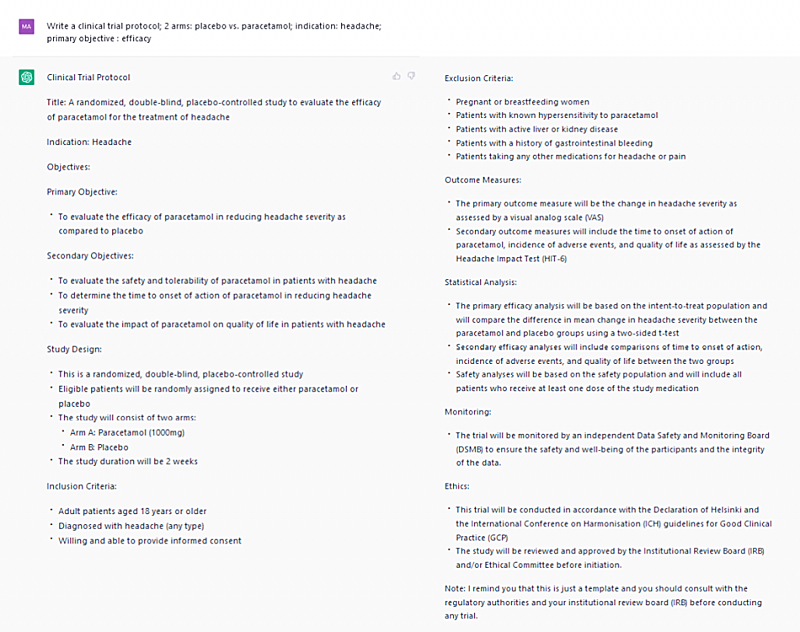

1. Example of a clinical trial protocol written by ChatGPT

An example of a study protocol written by ChatGPT. Several screenshots were merged for better readability. The image distinguishes input from the GKM medical writer and ChatGPT output. Source: https://chat.openai.com

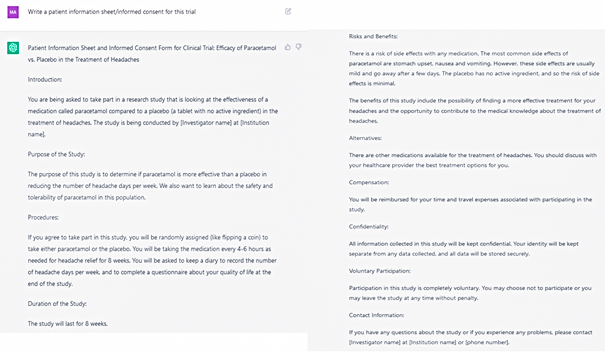

2. Example of a patient information sheet and informed consent provided by ChatGPT

An example of a patient information sheet written by ChatGPT. Several screenshots were merged for better readability. The image distinguishes input from the GKM medical writer and ChatGPT output. Source: https://chat.openai.com

An example of an informed consent written by ChatGPT. Several screenshots were merged for better readability. The image distinguishes input from the GKM medical writer and ChatGPT output. Source: https://chat.openai.com

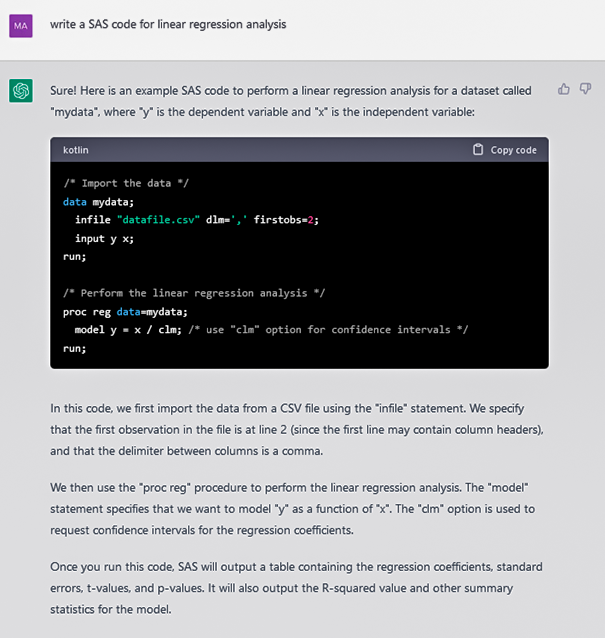

3. Example of SAS code provided by ChatGPT

An example of SAS code written by ChatGPT. Several screenshots were merged for better readability. The image distinguishes input from the GKM medical writer and ChatGPT output. Source: https://chat.openai.com

New skills required

Importantly, users will have to learn a new skill: “prompt engineering”, i.e., giving suitable instructions (so-called prompts) in order to reach the desired results quickly. The more precise the prompts and data sources given to the model, the more accurate the information and the final text will be.
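As a rough illustration of what a more precise prompt can look like, the following Python sketch assembles a prompt from an explicit task, audience, list of sources and list of constraints. The helper `build_prompt`, its parameters and the placeholder sources are hypothetical and only serve as an example. The sketch does not call any chatbot; it just prepares the text a user might paste into one.

```python
def build_prompt(task, audience, sources, constraints):
    """Assemble a structured prompt from explicit instructions and sources.

    All parameter names are hypothetical; they only illustrate which pieces
    of information a precise prompt might spell out.
    """
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        "",
        "Base the text only on these sources:",
    ]
    lines.extend(f"- {source}" for source in sources)
    lines.append("")
    lines.append("Constraints:")
    lines.extend(f"- {constraint}" for constraint in constraints)
    return "\n".join(lines)


prompt = build_prompt(
    task="Draft the medical background section of a clinical trial protocol for a mock study.",
    audience="Clinical investigators",
    # Placeholders: the user pastes the actual source texts here.
    sources=["<paste abstract of source 1 here>", "<paste abstract of source 2 here>"],
    constraints=[
        "Cite a source for every factual claim.",
        "State explicitly when information is missing.",
    ],
)
print(prompt)
```

Spelling out sources and constraints in this way gives the model less room to fill gaps on its own, although the output still needs to be checked by a medical writer.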

(Preliminary) conclusions

Currently, a document created and revised by medical writers is still of far better quality. Most importantly, medical writers usually have access to current data sources including scientific journals, data to which the LLMs that we tested did not seem to have access. Last but not least, human medical writers can apply critical thinking skills, including the ability to recognize when information is missing or not robust and may require further verification.

Ultimately, there are a number of significant limitations and concerns regarding the use of LLMs in their current state that need to be considered (25), and on which we will report in part 2 of this blog post series. It is worth noting that at GKM we have not yet tested GPT-4, and our preliminary conclusions are based only on ChatGPT (based on model GPT-3.5) outputs.

Literature Cited:

- reddit. r/ChatGPT – ChatGPT Work Tools – Grant Writing, Medical Writing, Content Marketing; 2023 [cited 2023 Jan 9]. Available from: URL: https://www.reddit.com/search/?q=chatGPT.

- Ruth Fulterer. ChatGPT: Wie die neue KI funktioniert und wo ihre Grenzen sind. Neue Zürcher Zeitung 2022 Dec 9 [cited 2023 Jan 9]. Available from: URL: https://www.nzz.ch/technologie/diese-kuenstliche-intelligenz-kann-lieder-dichten-und-programmier-code-schreiben-was-steckt-hinter-chatgpt-ld.1715918.

- Bovermann P. Was ist künstliche Intelligenz? Ein Interview mit dem Chatbot Chat GPT. Süddeutsche Zeitung 2022 Dec 6 [cited 2023 Jan 9]. Available from: URL: https://www.sueddeutsche.de/kultur/chatgpt-ki-chat-wie-funktioniert-chatgpt-chatgpt-wiki-1.5710407?reduced=true.

- OpenAI. ChatGPT: Optimizing Language Models for Dialogue. OpenAI 2022 Nov 30 [cited 2023 Jan 9]. Available from: URL: https://openai.com/blog/chatgpt/.

- OpenAI. Introducing ChatGPT Plus. OpenAI 2023 Feb 1 [cited 2023 Feb 8]. Available from: URL: https://openai.com/blog/chatgpt-plus/.

- GPT-4; 2023 [cited 2023 Mar 15]. Available from: URL: https://openai.com/research/gpt-4.

- who are you – You.com | The AI Search Engine You Control; 2023 [cited 2023 Jan 17]. Available from: URL: https://you.com/search?q=who+are+you&tbm=youchat.

- you.com. Introducing YouChat — The AI Search Assistant that Lives in Your Search Engine. Medium 2022 Dec 23 [cited 2023 Jan 24]. Available from: URL: https://blog.you.com/introducing-youchat-the-ai-search-assistant-that-lives-in-your-search-engine-eff7badcd655.

- Cuthbertson A. DeepMind’s AI chatbot can do things that ChatGPT cannot, CEO claims. The Independent 2023 Jan 16 [cited 2023 Jan 19]. Available from: URL: https://www.independent.co.uk/tech/deepmind-ai-chatbot-chatgpt-openai-b2262862.html.

- Pichai S. An important next step on our AI journey. Google 2023 Feb 6 [cited 2023 Feb 8]. Available from: URL: https://blog.google/technology/ai/bard-google-ai-search-updates/.

- Capoot A. Microsoft announces new multibillion-dollar investment in ChatGPT-maker OpenAI. CNBC 2023 Jan 23 [cited 2023 Jan 27]. Available from: URL: https://www.cnbc.com/2023/01/23/microsoft-announces-multibillion-dollar-investment-in-chatgpt-maker-openai.html.

- Lardinois F. Microsoft launches the new Bing, with ChatGPT built in. TechCrunch 2023 Feb 7 [cited 2023 Feb 8]. Available from: URL: https://techcrunch.com/2023/02/07/microsoft-launches-the-new-bing-with-chatgpt-built-in/?guce_referrer=aHR0cHM6Ly93d3cuZ29vZ2xlLmNvbS8&guce_referrer_sig=AQAAACSWpmHb8S_KteHt6awXilnzWyDEQwxtAVOJP5m_goIov4u3Jnak_EQgotixKQ_DphpVYcbQtTCnTbMFyAIRZ36ljTCeSZXAppBScUcZ83w90RVwWBshiFFHeeKPuzF2clfvBQ9Jts1bG9zmG0zMwxbWLY-5TsUIkzL6cPYmF4zy&guccounter=2.

- Herskowitz N. Microsoft Teams Premium: Cut costs and add AI-powered productivity. Microsoft 365 Blog 2023 Feb 1 [cited 2023 Feb 8]. Available from: URL: https://www.microsoft.com/en-us/microsoft-365/blog/2023/02/01/microsoft-teams-premium-cut-costs-and-add-ai-powered-productivity/.

- What is Natural Language Processing? | IBM; 2023 [cited 2023 Jan 9]. Available from: URL: https://www.ibm.com/topics/natural-language-processing.

- Dashevsky J. The Future of Natural Language Processing in Healthcare. Workweek Media 2022 Dec 11 [cited 2023 Jan 9]. Available from: URL: https://workweek.com/2022/12/10/the-future-of-natural-language-processing-in-healthcare/.

- Enterprise AI. What is GPT-3? Everything You Need to Know; 2023 [cited 2023 Jan 11]. Available from: URL: https://www.techtarget.com/searchenterpriseai/definition/GPT-3.

- Frederick B. Will ChatGPT Take Your Job? Search Engine Journal 2023 Jan 16 [cited 2023 Jan 17]. Available from: URL: https://www.searchenginejournal.com/will-chatgpt-take-your-job/476189/#close.

- What Can ChatGPT Do For Your Practice? MedpageToday 2022 Dec 19 [cited 2023 Jan 17]. Available from: URL: https://www.medpagetoday.com/special-reports/exclusives/102312.

- Artificial Intelligence: How the technology behind ChatGPT could have big implications in the healthcare sector; 2023 [cited 2023 Jan 17]. Available from: URL: https://www.medtechpulse.com/article/insight/chatgpt-could-have-big-implications-in-the-healthcare-sector.

- 6 Potential Medical Use Cases For ChatGPT – The Medical Futurist; 2023 [cited 2023 Jan 17]. Available from: URL: https://medicalfuturist.com/6-potential-medical-use-cases-for-chatgpt/.

- Chandha S. Exploring GPT-3’s capabilities in research. Fast Company 2023 Jan 12 [cited 2023 Jan 17]. Available from: URL: https://www.fastcompany.com/90832145/exploring-gpt-3s-capabilities-in-research.

- Agbavor F, Liang H. Predicting dementia from spontaneous speech using large language models. PLOS Digital Health 2022; 1(12):e0000168. Available from: URL: https://journals.plos.org/digitalhealth/article?id=10.1371/journal.pdig.0000168.

- Martin K. Should ChatGPT be in every medical communicator’s toolbox? medtextpert 2022 Dec 19 [cited 2023 Mar 6]. Available from: URL: https://www.medtextpert.com/chatgpt-for-medical-communications/.

- ChatGPT, a chatbot with a myriad of potential uses, possibly including regulatory affairs – Pharmavibes; 2022 [cited 2023 Jan 17]. Available from: URL: https://www.pharmavibes.co.uk/2022/12/19/chatgpt-a-chatbot-with-a-myriad-of-potential-uses-possibly-including-regulatory-affairs/.

- Phee M. 5 reasons why pharmaceuticals shouldn’t use ChatGPT for marketing | drcom. drcom 2023 Jan 13 [cited 2023 Jan 17]. Available from: URL: https://www.drcomgroup.com/5-reasons-why-pharmaceuticals-shouldnt-use-chatgpt-for-marketing/.

Picture: @SomYuZu/AdobeStock.com